Brands with strong traditional SEO rankings are getting skipped by ChatGPT, Perplexity, and Google’s AI Overviews. Not because their content is bad, but because it’s structured for a retrieval system that AI pipelines don’t use. Four days at SEO Week NYC 2026 laid out exactly what’s broken and what fixes it.

You’ve likely watched this play out already: rankings hold, but traffic from AI-generated answers goes to competitors. Or a prospect mentions they “looked it up” before calling, which increasingly means they asked an AI. The problem isn’t your SEO. It’s that the signals AI systems use to decide what to cite are often different from the signals that determine traditional rankings, and most brand sites haven’t been built for them yet.

AI Systems Filter Out Most Content Before They Ever Read It

AI retrieval pipelines evaluate content through a series of eligibility checks before a model ever sees it. Content that fails those checks can’t be cited, regardless of its quality or ranking. Krishna Madhavan, Principal Product Manager at Microsoft AI, opened the conference by describing what he called the “invisible, converged web”: a layer of grounding confidence scores, safety filters, publisher controls, and attribution signals that sits between your content and the AI systems your audience is using. If your content doesn’t carry the right signals, it gets filtered out at that layer. It never reaches the model.

This is the structural gap most brands don’t know they have. A page can rank on the first page of Google and be completely absent from AI-generated answers on the same topic, because ranking signals and retrieval eligibility signals are different. Google evaluates pages. AI pipelines evaluate whether individual content blocks are structured, sourced, and verified enough to ground a response. Madhavan’s framing was precise: modern SEO and GEO now feel less like ranking tactics and more like a distributed systems challenge, where the goal is coordinating the signals that let AI pipelines trust and reuse your content.

In every GEO audit we run at Oomph, most brand sites are missing at least two of those signals. The most common gaps are invisible in standard SEO tooling, which is exactly why brands with strong traditional performance are still surprised when their AI citation share is near zero.

Traffic Metrics Will Lie to You About Your AI Search Performance

AI Overviews, ChatGPT, and Perplexity are answering questions in your category and not sending traffic to your site, but your analytics won’t show you that as a problem, because there’s no traffic to track. Jori Ford, Chief Marketing and Product Officer at FoodBoss, introduced the HEO (Hybrid Engine Optimization) framework at SEO Week specifically to address this measurement gap. Her Hybrid Engine Score is a weekly composite that tracks both traditional ranking performance and AI citation performance in a single number. Measuring them separately, or measuring only one, gives you an incomplete and often misleading picture of your actual search visibility.

Dale Bertrand of Fire&Spark extended this into the CFO conversation that most marketing leaders are currently losing. If your traffic is down but AI-influenced conversions are up, you’re actually winning. GA4 misses most AI-driven attribution, so you look like you’re failing. Bertrand’s work with global brands showed that revenue-focused GEO consistently produces stronger business outcomes than traffic-focused SEO when you measure far enough downstream. The brands building those measurement and implementation frameworks now are the ones that will be able to defend AI search investment in 12 months, when leadership starts asking why organic traffic hasn’t recovered.

A Weak Paragraph Loses to a Strong One Every Time

AI systems retrieve at the paragraph level, not the page level, which means every paragraph on your site now competes independently to be cited. Mike King’s session was the most direct of the conference on this point. His framing: Google has been operating semantically for over a decade, most SEO tooling still does keyword math, and the gap between what tools measure and what AI systems actually evaluate has become the opportunity for brands willing to close it. A well-structured, well-evidenced paragraph on a thinner site gets cited ahead of a buried, unfocused paragraph on a high-authority domain.

The practical consequence for your content team is specific. Paragraphs need to open with their conclusion, not build toward it. Each paragraph should address one provable idea with enough context that it can be extracted and stand alone. Sourcing needs to be explicit and named. An unnamed statistic is an assertion an AI system can’t ground.

The brands we work with who’ve rebuilt their content architecture around these requirements are seeing measurable improvement in AI citation rates within 60–90 days. That improvement shows up in AI Overview appearances and third-party platform citations before it shows up in traffic numbers.

Four Technical Requirements Now Sit Between Your Content and AI Citation

Structured, sourced, crawlable, and machine-readable content gets cited by AI systems. Content missing any one of those properties gets filtered before retrieval. Andrea Volpini, CEO of WordLift, described the staged retrieval process AI systems use: models don’t consume your site whole, they pull from a pre-filtered subset of content that met a minimum bar for structure and verifiability. Content that isn’t structured, connected, and verifiable gets excluded from that subset, regardless of how good it is as writing.

The four technical requirements that determine whether your content clears that bar are: AI crawler access confirmed in your robots.txt; schema markup that is complete, accurate, and present on key pages; an llms.txt file that points AI agents to the content you most want them to read; and content blocks written so individual paragraphs can stand alone as cited answers. In every GEO audit we run at Oomph, most brand sites are missing at least two. None of these are advanced configurations. They’re the new baseline for being retrievable. Platforms like Scrunch can show you exactly where you stand across ChatGPT, Perplexity, Gemini, and Google AI Overviews: which prompts surface your brand, which source pages are driving citations, and where competitors are getting cited in your place.
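The first requirement, crawler access, can be spot-checked with a short script. The sketch below runs Python's standard-library robots.txt parser against an inline sample file. The user-agent tokens (GPTBot, PerplexityBot, ClaudeBot, Google-Extended), the sample rules, and the example.com URL are assumptions for illustration, so confirm the current tokens in each vendor's documentation before relying on the result.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice, load your site's real file.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

# Assumed AI crawler tokens; verify against each vendor's documentation.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, per user agent, whether robots.txt allows fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


report = crawler_access(SAMPLE_ROBOTS_TXT, "https://example.com/services/", AI_CRAWLERS)
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

In this sample, GPTBot is blocked site-wide while the other agents fall through to the wildcard rule, which is the kind of gap that standard SEO tooling tends not to surface.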

The Brands That Close This Gap First Will Be Significantly Harder to Displace

AI citation compounds the same way that traditional search authority compounds. Brands that establish consistent citation history build a signal advantage that takes competitors real time to close. The difference from traditional SEO is that the window to build that advantage early is shorter, because the field is moving fast and the gap between brands actively building for AI retrieval and brands waiting to see how it develops is widening every month.

The sequencing for closing that gap is straightforward. Technical access for AI crawlers comes first, because you can’t be cited if you can’t be read. Schema markup and structured data come next, because they’re the verification signals AI systems use to trust your content.

Passage-level content architecture follows. Reformatting existing strong content for standalone paragraph retrieval is often faster than creating new content. Third-party brand presence on the platforms AI models train on comes last, because it’s the authority signal that determines whether an AI system treats your content as a credible source or skips it in favor of one it recognizes.

At Oomph, we run GEO audits that score your site across all four of these dimensions and return a prioritized 30-day action plan. If you’re not sure where your brand stands on AI visibility, the audit tells you exactly which gaps are costing you citations right now. Talk to us about a GEO audit.

The DOJ just handed organizations an extra year on their WCAG compliance deadline, but that doesn’t mean the work stops. If anything, it’s a signal to accelerate.

On April 20, 2026, the Department of Justice issued an Interim Final Rule extending the ADA Title II web accessibility deadline by one year. State and local government entities serving populations of 50,000 or more now have until April 26, 2027, to achieve full WCAG 2.1 Level AA conformance. Smaller jurisdictions have until April 26, 2028. For organizations that have already been tracking Oomph’s earlier breakdown of the WCAG compliance landscape, this is the latest update, and the stakes are higher than the extension might suggest.

The Extension Doesn’t Change the Underlying Obligation

The DOJ’s rule revision pushes a deadline. It doesn’t remove one. WCAG 2.1 Level AA remains the legal standard under the ADA, and the obligation to make digital content accessible to people with disabilities has been codified since the original Title II rule was finalized in April 2024. The extension was issued in response to documented capacity constraints; some organizations were struggling with the scope of remediating thousands of PDFs and auditing vendor platforms. But organizations that have paused work in anticipation of a rollback shouldn’t interpret this as a signal to wait longer.

Private litigation hasn’t paused. ADA Title III lawsuits, which apply to private businesses, saw a 102% increase in recent years and aren’t tied to the DOJ’s enforcement calendar.

What the New Deadlines Mean for Your Organization

The updated compliance schedule breaks down by entity type:

- State and local governments (50,000+ population): April 26, 2027

- State and local governments (under 50,000 population) and special districts: April 26, 2028

- Private organizations that contract with or provide digital services to public entities: bound by the deadlines of their government partners, with contractual accessibility provisions becoming standard in procurement

If you work with public sector clients, including healthcare systems, universities, or municipalities, your contracts will increasingly reflect these requirements. Vendors are legally responsible for the accessibility of the tools and platforms they provide to covered entities. An extended deadline for your client doesn’t reduce your exposure.

Why an Extra Year Is Actually an Opportunity

Organizations that treat this extension as breathing room will spend it the same way they spent the last two years: waiting. The ones that use it intentionally will close the gap permanently.

True WCAG compliance requires more than fixing what’s broken today. It means building accessibility into your content production process, your procurement checklist, and your development workflow so that new content is compliant from the moment it’s published. There’s no grandfathering for content published after the compliance date; anything that goes live after your deadline has to meet the standard on day one.

The extension also offers something the original deadline didn’t: time to do the audit properly. A comprehensive audit, one that combines automated scanning with manual testing and includes real users with disabilities, takes time to execute and even more time to act on. Organizations that use this year to conduct a thorough audit, triage findings by risk, and implement remediation systematically will be in a fundamentally stronger position than those who rush a surface-level fix in the final weeks before a hard deadline.
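To make the automated half of that audit concrete, here is a minimal sketch of one of the simplest checks: finding images that have no alt attribute, which WCAG requires for non-text content. The class name and the HTML fragment are hypothetical, and a check like this complements manual testing rather than replacing it.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect <img> tags that have no alt attribute at all."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        # alt="" is valid for decorative images, so only a missing attribute is flagged.
        if tag == "img" and "alt" not in attributes:
            self.missing.append(attributes.get("src") or "(no src)")


# Hypothetical page fragment for illustration.
html = (
    '<img src="/logo.png" alt="City seal">'
    '<img src="/chart.png">'
    '<img src="/spacer.gif" alt="">'
)

finder = MissingAltFinder()
finder.feed(html)
print(finder.missing)
```

A script can tell you that alt text is absent, but not whether existing alt text is accurate or useful. That judgment is where manual review and testing with real users come in.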

What to Prioritize Now

The compliance work that matters most isn’t complicated, but it does require deliberate sequencing. Start with an honest inventory of what you have: web pages, PDFs, forms, video content, mobile applications. Identify which assets carry the highest user traffic and the highest legal exposure. That’s where remediation starts.
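One way to turn that inventory into a remediation order is a simple sort. The sketch below is illustrative only: the `Asset` fields, the 1 to 3 exposure rating, and the sample entries are all hypothetical, and your own team or counsel would need to assign the ratings.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    kind: str            # e.g. "web page", "PDF", "form"
    monthly_visits: int
    legal_exposure: int  # hypothetical 1-3 rating assigned by your team


def remediation_order(assets: list[Asset]) -> list[Asset]:
    """Sort by legal exposure first, then by traffic, highest first."""
    return sorted(
        assets,
        key=lambda a: (a.legal_exposure, a.monthly_visits),
        reverse=True,
    )


# Made-up inventory entries for illustration.
inventory = [
    Asset("Pay a bill form", "form", 12000, 3),
    Asset("Council minutes 2019", "PDF", 40, 1),
    Asset("Home page", "web page", 50000, 2),
]

for asset in remediation_order(inventory):
    print(asset.name, asset.kind, asset.monthly_visits)
```

Ranking exposure ahead of traffic is a design choice rather than a rule. Some teams may prefer a weighted score that blends the two.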

From there, the priorities are consistent regardless of organization type:

- Audit your highest-traffic pages and mission-critical digital services first.

- Address the underlying code. Overlay widgets don’t satisfy the standard and have been explicitly called out by the DOJ as insufficient.

- Review vendor contracts and confirm that third-party platforms in your digital ecosystem meet WCAG 2.1 Level AA.

- Build an accessibility policy that defines ownership, sets standards for new content, and creates a process for users to report issues.

- Train staff who create and publish digital content, not just developers.

The organizations that will find April 2027 manageable are already working through this list. The ones that aren’t have about 12 months to close a gap that was supposed to be closed already.

The Bigger Picture Hasn’t Changed

The DOJ’s extension is administrative. The underlying direction of travel toward universal, codified digital accessibility standards has been consistent for years and isn’t reversing. WCAG 3.0, expected no earlier than 2028, will shift from a pass/fail model to a tiered scoring system with Bronze, Silver, and Gold levels. Organizations that achieve full WCAG 2.1 Level AA conformance now will enter that transition from a position of strength.

Healthcare organizations that don’t structure their content for AI retrieval are already losing patients before the first visit. Tools like ChatGPT, Perplexity, and Google’s AI Overviews have become a first stop for health questions. They pull answers directly from web content without sending users to a website. If your organization’s content isn’t structured to show up in those answers, you’re invisible at the moment patients and caregivers are most actively searching.

Answer Engine Optimization (AEO) is the practice of structuring content so AI systems can find it, understand it, and cite it. It’s distinct from traditional SEO, though the two aren’t in conflict. Understanding the difference matters for every healthcare communicator making content decisions right now.

AI Search Engines Retrieve and Synthesize, They Don’t Rank and Link

Traditional SEO optimized full pages for rankings. A page with strong domain authority, good keyword coverage, and solid backlinks would surface near the top of a results page. Users would click through to read it. That model still works for many queries, but it’s no longer the whole picture.

If you’re weighing how SEO and generative engine optimization fit together, the distinction is worth understanding clearly.

AI answer engines don’t rank pages. They retrieve specific passages from across the web, synthesize an answer, and present it directly to the user. The user often never clicks through to the source. According to SparkToro’s 2024 zero-click search study, nearly 60% of Google searches end without a click. For healthcare communicators, that means a significant portion of your potential audience is forming opinions about their health, their options, and their providers without ever landing on your site. AI-generated answers accelerate that trend.

Content strategy decisions right now should account for whether your content is structured so AI systems can extract a clear, direct answer from it, not just whether it ranks.

Healthcare Authority Helps, But Structure Is What Gets You Cited

Health information is a high-stakes category for AI systems. Google classifies health, finance, and legal content as YMYL (Your Money or Your Life) because inaccurate answers carry real consequences. AI systems tend to be more selective about which sources they retrieve and cite in these categories.

That selectivity works in favor of established healthcare organizations. Hospitals, health systems, and credentialed clinics carry demonstrated authority, and that matters more in YMYL retrieval than in general content. But authority alone isn’t enough. Content still has to be structured correctly to be extracted. A well-credentialed source with poorly structured content will lose to a less-credentialed source that’s written in a way AI systems can parse.

Most healthcare organizations already have the credibility AI systems favor. That means the path to better retrieval runs through content structure, not authority-building.

What AI Systems Actually Look For in Content

AI retrieval systems evaluate each paragraph independently, treating it as a standalone candidate for citation. A page with a strong introduction and weak middle sections will have the strong introduction cited and the rest ignored. This changes how content needs to be written.

Passages that get retrieved share a common structure. They open with a direct, declarative answer to a specific question. They use plain language rather than jargon. And they don’t require surrounding context to make sense.

A paragraph that opens with “There are many factors to consider when evaluating treatment options” is hard for an AI system to use. A paragraph that opens with “Most patients with early-stage [condition] have three primary treatment options” gives the system something it can extract and cite directly. That’s the foundation of citation-ready content architecture, and it’s the standard healthcare organizations should be building toward.

Schema markup also plays a meaningful role. Structured data signals to AI systems how to categorize and use your content. Three schema types matter most for healthcare organizations: FAQ schema for patient question pages, MedicalCondition schema for clinical content, and HowTo schema for procedural or instructional pages. Organizations that have implemented structured data on their clinical and service pages have a measurable advantage in AI retrieval over those that haven’t.
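As a rough illustration of the FAQ case, the sketch below builds schema.org FAQPage markup as JSON-LD from question and answer pairs. The function name and the sample question are hypothetical, and the answer text is a placeholder that should come from reviewed clinical copy.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content; real answers should come from reviewed clinical copy.
markup = faq_jsonld([
    ("How long does recovery usually take?",
     "Placeholder answer reviewed by your clinical team."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed script tag is what would be embedded in the page. How it gets there depends on your CMS, which is why this work usually needs both the content team and whoever manages the templates.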

The Patient Journey Now Runs Through AI Before It Reaches You

Patients and caregivers typically begin with a question typed into an AI tool or search engine, well before they consider visiting a specific organization’s website. By the time they reach your site, they’ve already formed an understanding of their condition, their options, and what they’re looking for based on whatever content those tools surfaced.

Whether your organization is part of that pre-visit understanding depends entirely on whether your content was present in the AI’s answer. If it wasn’t, a competitor’s content filled that space instead.

For healthcare marketers, showing up in AI answers is about whether your organization is part of the conversation patients are having before they ever contact you. That matters well beyond traffic metrics.

Where to Start: Four Practical Priorities

Most healthcare organizations don’t need to rebuild their content from scratch. They need to identify where their existing content is close to being retrievable and close the gap. Four areas consistently make the biggest difference.

Audit your highest-traffic clinical and service pages for passage structure. Read the first sentence of every paragraph on each page. If those sentences don’t directly state the main point of that paragraph, the content isn’t structured for AI retrieval. Rewriting opening sentences to lead with the conclusion is often the fastest improvement available.

Build out FAQ content with direct, complete answers. FAQ pages are one of the most reliably retrieved content formats in AI search because they’re structured around specific questions with discrete answers. Healthcare organizations that publish clear FAQs on common patient questions (symptoms, procedures, recovery, cost expectations) give AI systems exactly the format they’re looking for.

Implement structured data on clinical pages. If your web team hasn’t added schema markup to your clinical and service pages, that’s a near-term technical priority. The implementation isn’t complex, but it requires coordination between your content team and whoever manages your CMS.

Prioritize topical depth over topical breadth. AI systems favor sources that demonstrate consistent depth on a topic over sources that cover many topics superficially. For healthcare communicators, this means investing in comprehensive content on your core service lines rather than spreading thin across every health topic your organization touches.
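The first priority above, reading the first sentence of every paragraph, is easy to script as a first pass. The sketch below pulls the opening sentence from each paragraph and flags ones that start with a vague opener. The opener list is a hypothetical starting point and the sentence splitting is deliberately naive, so treat the output as a review queue, not a verdict.

```python
import re

# Hypothetical opener patterns that tend to delay the main point; tune for your content.
VAGUE_OPENERS = ("there are many", "it is important", "when it comes to", "in today's")


def first_sentences(text: str) -> list[str]:
    """Return the first sentence of each blank-line-separated paragraph."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    # Naive split: a sentence ends at ., ! or ? followed by whitespace.
    return [re.split(r"(?<=[.!?])\s+", p, maxsplit=1)[0] for p in paragraphs]


def flag_vague(sentences: list[str]) -> list[str]:
    """Return the first sentences that begin with a known vague opener."""
    return [s for s in sentences if s.lower().startswith(VAGUE_OPENERS)]


page = """There are many factors to consider when evaluating treatment options. Cost is one.

Most patients with early-stage [condition] have three primary treatment options. Each differs in recovery time."""

openers = first_sentences(page)
for sentence in flag_vague(openers):
    print("Review:", sentence)
```

Run against the two example openers used earlier in this article, only the "many factors" paragraph is flagged for rewriting.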

The same characteristics that make content useful for AI retrieval (clear structure, direct answers, demonstrated depth) make content better for human readers too. Raising the standard in one area raises it across the board.

Oomph will be at NESHCo May 27–29 in Burlington. If you’re headed there too, we hope to see you.

Structured content distribution is the decoupling of content from presentation through a headless CMS and Content as a Service (CaaS) architecture. It is a sound strategy for organizations managing complex content distribution networks across multiple channels.

To succeed, this digital transformation requires organizations to change both their publishing workflows and their content ownership structures. Governance complexity affects 41% of CaaS adopters, workflow mismatches impact a third, and training requirements average 14 to 18 weeks.

We have implemented these systems for clients in healthcare, financial services, and higher education, and the pattern is consistent: the three failures that kill structured content initiatives are the preview gap, the ownership vacuum, and the training deficit. Here is what we have learned about each one — and what actually works.

The Promise

The pitch for structured content distribution is compelling: create content once, store it as modular data in a headless CMS, deliver it via API to any channel (web, mobile, kiosks, AI agents) without reformatting. The CaaS market is projected to reach $2.8 billion by 2035, and over 65% of enterprises have adopted headless CMS architectures.

What the pitch leaves out is that integration challenges affect 46% of adopters using legacy CMS platforms, and that 31% of enterprises encounter deployment delays exceeding six months. The technology works, but governance requires just as much attention and is often overlooked. We have seen this avoidable pattern repeat across many structured content implementations.

Why Do Structured Content Migrations Stall?

In short, because organizations implement the technology without redesigning how their teams create, review, approve, and own content. That’s the governance problem.

A headless CMS decouples content from presentation. But most editorial teams have spent years, sometimes decades, working in systems where creating content and seeing how it looks are the same activity. WordPress, Drupal, and even SharePoint have a visual editing experience: build a page, see the page, publish the page.

Structured content does not work this way. Authors fill in fields like title, body, metadata, and related entries to publish content objects, not pages. As one analysis of Contentful’s editorial interface notes, “content editors work in structured content entry forms without seeing how content will render in production.” The front-end determines how those objects appear to users.
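A minimal sketch can make the distinction concrete. Below, an author fills in fields on a content object, and two separate front-ends decide how it appears. The class, field names, and render functions are hypothetical and not tied to any particular CMS.

```python
from dataclasses import dataclass, field
from html import escape


@dataclass
class ContentObject:
    """A structured entry: fields only, no layout. Field names are illustrative."""
    title: str
    body: str
    metadata: dict[str, str] = field(default_factory=dict)
    related_ids: list[str] = field(default_factory=list)


def render_web(entry: ContentObject) -> str:
    """One hypothetical front-end: an HTML fragment."""
    return f"<article><h1>{escape(entry.title)}</h1><p>{escape(entry.body)}</p></article>"


def render_chat(entry: ContentObject) -> str:
    """Another hypothetical front-end: plain text for a chatbot reply."""
    return f"{entry.title}: {entry.body}"


entry = ContentObject(title="Visiting hours", body="Open 9am to 8pm daily.")
print(render_web(entry))
print(render_chat(entry))
```

The author only ever touches `ContentObject`. Neither rendering is visible from the entry form, which is exactly the loss of context that editorial teams feel.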

That architectural distinction is the correct one for consistent omnichannel delivery. It is also the one most likely to break editorial workflow expectations when teams do not deliberately plan for this big shift. In our experience, three governance failures account for the vast majority of structured content stalls.

What Is the Preview Gap, and Why Does It Derail Teams?

The preview gap is the loss of visual context that editorial teams experience when moving from a WYSIWYG (what you see is what you get) environment to a structured content interface, and it is the most immediate friction point in any headless CMS migration.

Authors who previously built pages visually are now filling in form fields and trusting that a front-end will render them correctly. The shift from “building a page” to “managing a content object” takes adjustment, and “once teams adapt, the structured approach tends to produce more consistent, reusable content.” The problem is what happens before they adapt.

What happens is that authors create workarounds. They paste formatted content into rich text fields, breaking the structured model. They submit tickets to developers asking “what will this look like?” multiple times per week. They maintain shadow documents in Google Docs so they can see their work in context. Every workaround is a governance failure — content that exists outside the system, formatting that undermines the content model, and developer time consumed by preview requests instead of feature development.

The planning that pays off includes building live preview environments for as many content sources as possible. This development work typically gets deprioritized because it is not user-facing, but it determines the success of the new system. As one migration guide puts it, headless platforms deliver excellent editorial experiences “when configured correctly — visual editing, live preview, flexible page-building, role-based permissions. But that configuration is work, it doesn’t happen by default.” Budget for it, build it first, and do not launch editorial access without it.

What Is the Ownership Vacuum?

The ownership vacuum is what happens when structured content crosses departmental boundaries without clear governance over who maintains the content model, who approves changes to shared components, and who is accountable when content is reused in a context the original author never intended.

In a traditional CMS, the marketing team owns the marketing pages, the product team owns product pages, etc. Structured content breaks this model deliberately — a product description created once might appear on the website, in a mobile app, in an email campaign, and through a chatbot simultaneously. But governance complexity affects 41% of CaaS adopters, and multi-team collaboration across 6 to 10 departments increases governance overhead by 27%.

Questions seldom asked include:

- When the compliance team changes a regulatory disclaimer, who is responsible for verifying that the change renders correctly across every channel consuming that content object?

- When marketing adds a field to the product content type, who assesses the downstream impact on the mobile app and the support knowledge base?

We have seen organizations discover these questions six months post-launch, usually during a content audit that reveals inconsistencies no one can trace. In regulated industries — healthcare, financial services, higher education — those inconsistencies are compliance risks.

The fix is to establish a content model governance board before migration begins. A small, cross-functional group (typically 3 to 5 people spanning content strategy, development, and compliance) owns the content model as a shared organizational asset. They approve changes to content types, evaluate reuse implications, and maintain a living inventory of where shared content objects appear. This role does not exist in traditional CMS organizations because each team owns its own pages and little is shared. In structured content environments, it is necessary.
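The living inventory does not need to be elaborate. As a sketch under simple assumptions, it can start as a mapping from each shared content object to the channels that consume it. The function names, object ID, and channel names below are hypothetical.

```python
# Hypothetical inventory: content object ID -> channels that consume it.
usage_inventory: dict[str, set[str]] = {}


def register_use(object_id: str, channel: str) -> None:
    """Record that a channel renders a shared content object."""
    usage_inventory.setdefault(object_id, set()).add(channel)


def channels_to_verify(object_id: str) -> list[str]:
    """List every channel to re-check after the object changes."""
    return sorted(usage_inventory.get(object_id, set()))


register_use("regulatory-disclaimer", "website")
register_use("regulatory-disclaimer", "mobile-app")
register_use("regulatory-disclaimer", "email")

print(channels_to_verify("regulatory-disclaimer"))
```

When compliance edits the disclaimer, the lookup answers the question of which channels need to be verified, and an empty result for an unknown ID signals an object nobody has registered yet.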

Why Does the Training Deficit Compound Everything?

Because organizations allocate 90% of their transformation budgets to technology and implementation, and only 10% to change management — the part that determines whether anyone actually uses the system they built.

Training requirements for CaaS implementations average 14 to 18 weeks, the elapsed time from initial exposure to genuine editorial fluency. This training creates the confidence for authors to create, structure, and publish content without reverting to old habits or filing developer tickets. Most implementation budgets account for a one-day training session and a knowledge base article. The gap between that and actual fluency is where adoption dies.

The compounding effect of the training deficit makes this particularly damaging. Undertrained authors hit the preview gap and panic. Without clear governance ownership, there is no one to answer their questions authoritatively. They build workarounds. Those workarounds corrupt the content model. The corrupted content model undermines the case for structured content. Stakeholders lose confidence. The transformation stalls.

BCG’s study of 850+ companies found that, globally, only 35% of digital transformations meet their value targets. That failure rate reflects a change management problem that is easy to mistake for a flaw in the technology itself.

To avoid this failure spiral, structure editorial onboarding as a phased engagement, not a one-and-done event. In our implementations, we start with a pilot group of 3 to 5 authors working with the system while the front-end is still being built. They surface friction points that the development team addresses in real time. By the time the broader editorial team is onboarded, the common pain points have been resolved, and the pilot group serves as advocates who can answer questions and support their peers. This approach adds little cost and dramatically improves adoption velocity.

What Should Organizations Do Before Starting a Structured Content Migration?

Treat governance design as the foundation of a successful digital transformation:

- Audit your editorial workflows as they actually operate. Map who creates content, who reviews it, who approves it, and where informal workarounds exist. As one migration planning guide advises, most publishing workflows “are often based on legacy systems, informal approvals, or staff availability. The result? Delays, missed steps, and content that never quite gets finished.” Your structured content governance must account for the real workflow, not the theoretical one.

- Define content model ownership before selecting a platform. Determine who will own the content model as an organizational asset, who can request changes, and what the approval process looks like. This governance structure should be platform-agnostic — it is an organizational decision, not a technical one. We have helped clients build this through our roadmapping and strategy engagements, and it consistently reduces mid-project governance confusion.

- Budget for editorial experience parity. If your authors currently have WYSIWYG editing, live preview, and visual page building, do not assume they will accept a simpler and more limiting form-based interface. Calculate the development effort required to provide contextual preview in your new architecture and include it in the implementation scope, not as a phase-two enhancement. Phase two rarely arrives before editorial frustration does.

Wrap Up

The CaaS pitch is not wrong. Structured content distribution is the right architecture for organizations publishing across multiple channels, and it is increasingly the right architecture for AI readiness — structured data is what AI systems consume most effectively. But the promise underestimates the organizational effort to make it successful.

Technology is the easy part. Governance, training, and editorial adoption are harder, and that is where implementations succeed or fail.

We have built these systems on Contentful, Drupal, and composable architectures for organizations in regulated industries where getting content wrong has real consequences. The lesson we keep relearning is the same one: start with the team, not the platform.

Summary

On April 20, 2026, the Department of Justice extended ADA Title II web accessibility compliance deadlines for state and local government entities by one year, to April 26, 2027 for larger entities and April 26, 2028 for smaller ones. The extension does not pause underlying accessibility obligations, and it does not extend the separate HHS Section 504 deadline that may apply to hospitals and nonprofits receiving federal funding. Organizations tracking a single calendar are exposed to what we call the two-deadline trap. The right response is to use the extension to systematize accessibility, not to defer it.

This article is not legal advice. Confirm which rules apply to your organization with qualified counsel.

Four days before the original compliance date, the DOJ reset the clock.

Per a summary from Jackson Lewis, state and local governments with populations over 50,000 now have until April 26, 2027 to comply with WCAG 2.1 Level AA under Title II. Smaller entities and special districts have until April 26, 2028.

If you run digital for a public entity, exhale. If the extension made you slow down, recalibrate. The deadlines moved, but the risk did not.

What actually changed, and what did not?

The DOJ pushed back the date that specific technical requirements become enforceable.

What did not change: the underlying ADA obligation to provide accessible programs and services. Title III public-accommodation risk for hospitals, providers, and nonprofits is unaffected. Demand letters and accessibility-related litigation continued straight through the extension announcement; they did not pause for it.

The compliance date is a deadline, not a start date. Organizations that wait will spend the extension period accumulating debt in templates, content, and vendor contracts, then attempt to remediate it in a sprint. That sprint is where the avoidable risk lives.

Why doesn’t the extension help hospitals and nonprofits?

The DOJ rule covers state and local government entities. It does not cover hospitals and nonprofits whose accessibility obligations come from a different source: federal financial assistance under Section 504 of the Rehabilitation Act.

Jackson Lewis notes that HHS has a separate Section 504 web accessibility compliance date, and as of this writing it has not been extended. Until HHS acts, plan as if it holds.

If your organization receives HHS funding, operates patient portals, runs scheduling or billing flows, accepts donations online, or hosts learning and event platforms, your timeline is likely shorter than the DOJ headline suggests. Title III public-accommodation exposure runs alongside it.

What is the two-deadline trap?

The two-deadline trap is the assumption that a single, well-publicized accessibility deadline is the only one that applies to your organization.

It happens when leadership tracks the DOJ Title II extension and treats it as the program’s primary clock, while a separate Section 504 or Title III obligation governs the actual exposure. The result is a roadmap pegged to the wrong date and a remediation budget that arrives late.

Avoiding it requires confirming, in writing and with counsel, which rules apply, which deadlines govern, and which user-facing services fall inside each scope.

What does day-one compliance actually look like?

Day-one compliance is the day your organization can demonstrate that new content is published accessibly, high-impact user flows work with assistive technology, vendors are managed as part of your posture, and governance is in place.

In our experience working with regulated organizations, the failure mode is rarely the homepage. It is the publishing system that keeps creating new accessibility debt — new pages, new PDFs, new embedded forms, new third-party widgets — faster than remediation can clear it. A defensible program stops the inflow before it works down the backlog.

That means accessibility moves upstream into design system components, CMS templates, content briefs, QA gates, and vendor intake. “Archived content” stops being a folder name and becomes a governance decision with rules. Procurement language changes so the next contract renewal does not lock in another year of vendor risk.
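One way to picture a QA gate in the publishing path is a small check that runs on a content fragment before it goes live. The sketch below is an illustration under stated assumptions, not a conformance test: the class and function names (`PublishGate`, `check_fragment`) are hypothetical, it uses only Python's standard library, and it looks for just two machine-detectable problems (images with no `alt` attribute and form controls with no associated label). It does not recognize labels that wrap their input, and a clean pass says nothing about WCAG 2.1 AA as a whole.

```python
from html.parser import HTMLParser


class PublishGate(HTMLParser):
    """Collects a few machine-detectable accessibility problems in an HTML fragment."""

    def __init__(self):
        super().__init__()
        self.problems = []
        self.label_targets = set()  # ids referenced by <label for="...">
        self.control_ids = []       # id (or None) of each control lacking an ARIA name

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img" and "alt" not in attrs:
            # alt="" is allowed (decorative image); a missing attribute is not.
            self.problems.append(f"img missing alt attribute: {attrs.get('src', '?')}")
        elif tag == "label" and attrs.get("for"):
            self.label_targets.add(attrs["for"])
        elif tag in ("input", "select", "textarea"):
            if attrs.get("type") in ("hidden", "submit", "button"):
                return
            if "aria-label" not in attrs and "aria-labelledby" not in attrs:
                self.control_ids.append(attrs.get("id"))


def check_fragment(html):
    """Return a list of problem descriptions; an empty list means nothing was flagged."""
    gate = PublishGate()
    gate.feed(html)
    gate.close()
    # Labels may appear before or after their control, so match them at the end.
    for control_id in gate.control_ids:
        if control_id is None or control_id not in gate.label_targets:
            gate.problems.append(f"form control without a label: id={control_id}")
    return gate.problems
```

A check like this belongs alongside manual testing with assistive technology, not in place of it. Its job is to stop the most obvious new debt at the template and authoring stage.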

Will an accessibility overlay protect you?

No. Overlays can adjust some visual and interaction settings for some users, but they do not remediate the underlying barriers in your templates, components, content, or third-party tools. The Overlay Fact Sheet, signed by hundreds of accessibility practitioners and organizations, documents the consensus position.

If a widget is your strategy, assume you still need code-level fixes in templates, manual testing with assistive technology, content authoring training, and a third-party tool plan. The widget is not a substitute for any of those, and a number of overlay vendors have themselves been named in accessibility lawsuits.

What should accessibility leaders do this week?

Five actions, in order.

- Confirm which rules apply, and which deadline governs. Title II, Section 504, Title III, or more than one. If there is uncertainty, this is a counsel question, not an internal one.

- Name a single accessibility owner. Not a committee, but one person responsible for coordinating across IT, content, legal, and procurement. Accountability is the program.

- Test your top five user-critical flows manually. Forms, authentication, scheduling, payments, donations, patient portal: whatever blocks access to your primary services. Manual keyboard-only and screen reader spot checks find what automated scanners miss.

- Inventory third-party tools and audit their contracts. Where contracts are silent on accessibility, flag them as priority renewals. Your compliance posture runs through every embedded vendor whether the contract says so or not.

- Write a 90-day plan and share it with leadership. Specific, resourced, and tracked beats comprehensive and aspirational every time.

The extension is not a year off. It is a year to put a defensible program in place before the rules apply more explicitly than they already do. Use it wisely.

Bill Gates wrote “Content is King” back in 1996. He was right for about thirty years. On the open web, the winners were the ones who could produce, distribute, and monetize content at scale. That era shaped how we built digital products, how we organized marketing teams, and how we thought about content platforms.

That era is getting a new chapter.

When content becomes context

In the age of agents, content is context. It’s the raw material an AI uses to answer a customer’s question, draft a proposal, summarize a policy, or make a decision on behalf of your business.

If your context is a mess, your agent is a mess. Garbage in, confident-sounding garbage out.

For organizations in healthcare, higher education, and associations (industries where we work every day), governing that context isn’t a nice-to-have. A health system deploying an agent to answer patient questions needs to know which clinical protocol is current, who approved it, and what the agent is and isn’t allowed to cite. An association managing member benefits can’t afford an agent that surfaces a two-year-old policy document as current guidance.

And it’s not just the regulated organizations themselves. The enterprise technology companies that serve these industries (the SaaS platforms, the data providers, the system integrators) face the same challenge: if the content powering their products isn’t structured and governed, the agents built on top of it will inherit every gap. The stakes in regulated industries make the content-as-context problem concrete and urgent, but the same dynamics show up everywhere brand, voice, and accuracy matter: retail pricing, financial disclosures, B2B product specifications, public sector policy. Different risk profiles, same fundamental problem.

This isn’t theoretical. Gartner predicts that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. The shift is already moving from prediction to product.

The platforms we work with every day show the movement clearly. The Drupal AI Initiative launched last June and hit $1 million in funding within five months, with the Drupal AI and AI Agents modules reaching production-ready status in October 2025. Acquia built on that foundation with Acquia Source, shipping three AI agents for its Drupal-powered SaaS CMS in December. Contentful open-sourced its MCP server and has been publishing active guidance on agentic content operations. These aren’t experiments. They’re shipping.

Across the category, the pattern is broad. Contentstack launched Agent OS in September 2025 and introduced what it calls the “Context Economy” as its positioning. Kontent.ai shipped what it calls an Agentic CMS the following month. The Model Context Protocol that Anthropic introduced in late 2024 has become the connective tissue, adopted by OpenAI, Google DeepMind, and most of the CMS world.

The platforms are ready. The question is whether your content is.

What agents actually need

An agent doesn’t want a rendered web page. It wants structured, canonical, permissioned, versioned truth. That means:

- Structure so the agent can reason over content rather than scrape through marketing copy

- Versioning so it knows which policy, price, or product spec is current

- Permissions so the agent answering a customer question can’t pull from an internal-only HR doc

- Freshness signals so stale content doesn’t get treated as authoritative

- Governance so legal, brand, and compliance can trust what the agent says on their behalf

That’s the same job a mature content platform has been doing for years, just pointed at a new kind of consumer.
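To make those five requirements concrete, here is a minimal sketch of what a governed content record might carry and how an agent-facing layer could decide whether to hand it over. Everything here is an assumption for illustration: the `ContentRecord` fields, the `usable_as_context` function, and the one-year freshness window are hypothetical, not the schema of any particular CMS.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class ContentRecord:
    entry_id: str
    body: str                    # structure: a typed field, not a rendered page
    version: int
    is_current: bool             # versioning: is this the live revision?
    audiences: FrozenSet[str]    # permissions: who may receive this content
    reviewed_on: date            # freshness: date of the last editorial review
    approved_by: Optional[str]   # governance: None means not yet approved


def usable_as_context(record, audience, today, max_age_days=365):
    """Return True only if the record passes every check for this audience."""
    if not record.is_current:
        return False
    if audience not in record.audiences:
        return False
    if (today - record.reviewed_on).days > max_age_days:
        return False
    return record.approved_by is not None
```

The point of the sketch is that each check reads a field an editor already maintains. If the field does not exist in your content model, the agent has nothing to check.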

We’ve seen this movie before

Every channel shift exposes whether your content was ever really structured to begin with. CD-ROM, then the web, then mobile, now agents. Each one forces organizations to untangle content from presentation. Headless CMS platforms like Drupal, Contentful, Sanity, and Strapi won that argument. Content as structured data, delivered via API, rendered wherever you need it.

Agents are the most demanding channel yet. They don’t just display your content. They consume it, reason over it, and then take action. If your content is trapped inside HTML blobs or buried in PDFs that no one’s touched since 2021, it’s not ready to be context. Structure is the whole game now.

Where context lives today

Right now, company context is scattered across:

- Websites and headless CMS platforms

- GitHub repos full of markdown

- Confluence, Notion, SharePoint, Google Drive

- Salesforce, HubSpot, and a dozen other systems of record

- PDFs, Slack threads, and somebody’s laptop

Some of these are built for governance. Most aren’t. GitHub is hands-down great for technical content and version control, but marketing and legal teams aren’t opening pull requests to update a pricing page. Notion is excellent for collaboration, weak on structured content models and role-based delivery. Every organization I talk to has some version of this scatter, and it’s about to become a much bigger problem.

The rise of the Context Management System

The old acronym still works. CMS. New job.

Headless CMS platforms have quietly solved about 70% of what agents need. Structured content models. API-first delivery. Editorial workflows. Roles and permissions. Versioning. Audit trails. What they’re adding now is the connective tissue: MCP servers, vector indexing, semantic metadata, and agent-optimized delivery endpoints. Acquia is embedding AI agents directly into Drupal-powered workflows through Acquia Source, and Contentful has open-sourced its MCP server to let agents take action on content operations. Across the rest of the category, Sanity launched its Content Agent in January 2026, and Storyblok, Brightspot, and dotCMS have released MCP servers of their own. That’s a much smaller leap than building the whole governance layer from scratch.

The “just throw it all in a vector database” approach has real merit as a retrieval layer. Retrieval is one job. Governance is a different one: who owns canonical truth, who approved the content, when it expires, and who’s allowed to see it. That’s always been the CMS job. It matters more now, not less.

For teams working on Drupal, Contentful, or Acquia Source, this is encouraging. The architectural decisions those platforms made years ago (structured data, granular revisioning, API-first design) turn out to be exactly what AI agents need. Your investment in content architecture is paying off in ways you didn’t plan for. Call it a head start.

What to do about it

If you’re building agentic products, or planning to, the content question is the quiet one that will bite you later. This is the work we’re spending most of our time on with clients right now. A few forward moves:

- Audit where your content actually lives and who owns it. You will be surprised.

- Pick a source of truth for each category of content. Don’t let five systems claim the same ground.

- Get your structured content models right. If your content is trapped inside HTML, it isn’t ready to be context.

- Build the governance layer before you need it. Versioning, permissions, approval workflows. Your legal team will thank you. So will your agent.

- Connect your CMS to your agents via MCP or equivalent. This is how context flows. Do it early.
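The first two moves above lend themselves to a simple inventory check. As a rough sketch, assuming you have collected a list of which system holds which category of content, the hypothetical `find_contested_categories` function below surfaces every category that more than one system claims. The tuple layout and the names are assumptions, not a standard format.

```python
from collections import defaultdict


def find_contested_categories(inventory):
    """inventory: iterable of (system, category, owner) tuples.

    Returns {category: [systems...]} for every category held in more than one system.
    """
    claims = defaultdict(set)
    for system, category, _owner in inventory:
        claims[category].add(system)
    return {
        category: sorted(systems)
        for category, systems in claims.items()
        if len(systems) > 1
    }
```

Each contested category in the result is a decision waiting to be made: one system becomes the source of truth, and the others either sync from it or stop publishing that content.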

Content was king when the battle was for attention. Context is king now that the battle is for correctness. Agents are only as good as the material you feed them, and that material has to be managed with the same rigor we’ve applied to code, to data, and yes, to content itself.

The organizations that treat content governance as infrastructure, not a cleanup project, will be the ones whose agents are trustworthy from day one. That window is shorter than it looks.

Summary

Most content strategies optimize for one outcome: ranking. Ranking is only half the visibility equation now. Citation-Ready Content Architecture, developed at Oomph, helps organizations build content that performs across traditional search results and AI-generated answers simultaneously. It rests on three principles – modular structure, demonstrated authority, and extractable specificity – and we apply it with clients in healthcare, higher education, and government where being cited accurately is as important as being found.

This crystallized during a client conversation earlier this year. We were looking at analytics for a major healthcare organization, and the pattern was striking. Impressions were climbing. Rankings were stable. But clicks were dropping steadily, month over month. The content was being surfaced by Google, but patients were getting their answers from AI Overviews without ever visiting the site.

That’s a visibility problem most of us weren’t trained to solve – and it requires a different content architecture.

Gartner predicts traditional search volume will drop 25% by the end of 2026 as users migrate to AI-powered answer engines. Ahrefs found that 80% of URLs cited by ChatGPT, Perplexity, and Copilot don’t rank in Google’s top 100 for the original query. And the Pew Research Center’s study of 68,879 actual Google searches found that only 8% of users clicked a traditional result when an AI Overview appeared, compared to 15% without one – roughly half the click-through rate.

Content that ranks and content that gets cited aren’t always the same – but they can be, if you build for both from the start. That’s Citation-Ready Content Architecture.

What Is Citation-Ready Content Architecture?

Citation-Ready Content Architecture is the practice of structuring digital content so it simultaneously ranks in traditional search engine results and gets extracted, synthesized, and cited by AI answer engines like ChatGPT, Google AI Overviews, and Perplexity. Developed by Oomph as a framework for regulated industries, it combines modular content structure, demonstrated authority signals, and extractable specificity into a unified content design principle – replacing the need to maintain separate SEO and GEO strategies.

The key word in that definition is “simultaneously.” That means content architecturally designed to work across every discovery surface – ranked results, AI summaries, voice assistants, whatever comes next – because the underlying structure supports all of them.

In our work with clients across healthcare, higher education, and government, we’ve found this transition isn’t a massive lift for organizations with strong content fundamentals. The gap between SEO-optimized and citation-ready content is structural, not substantive – it’s about how content is organized, not whether it’s good.

Why Do Organizations Need a New Content Architecture Now?

Information discovery has forked. Content built for only one path leaves visibility on the table.

Two parallel discovery systems now exist. Traditional search ranks your content in a list users scan. AI-powered answer engines synthesize information from multiple sources into a single response – often without the user ever clicking through to your site.

The research is unambiguous. The foundational Princeton GEO study demonstrated that content optimized for generative engines can boost visibility by up to 40% in AI responses. But it also showed that the most effective strategies vary by domain – what works for a law firm doesn’t necessarily work for a children’s hospital. A March 2026 study from researchers at the University of Tokyo found that structural optimization alone – independent of content changes – improved citation rates by 17.3% across six major generative engines.

The most striking finding: research from AirOps found that pages ranking number one in Google were cited by ChatGPT 3.5 times more often than pages outside the top 20. Strong SEO remains the foundation. Citation-ready architecture is what makes that foundation legible to AI systems too.

What Are the Three Principles of Citation-Ready Content?

The framework rests on three principles. Each serves both search engines and AI systems simultaneously – that dual purpose is the point.

Modular structure

AI systems don’t read your article start to finish and decide whether to cite the whole thing. They extract passages – a definition, a data point, a direct answer to a specific question. Content with clear headings, self-contained sections, and answer-first paragraphs gives both search algorithms and AI systems clean material to work with.

We’ve written about how LLMs index and use content – and the takeaway is that the same accessibility principles that help AI crawlers parse your pages also make your content more citation-worthy. Semantic HTML, logical heading hierarchies, and sections that can stand on their own aren’t new concepts. They’re just worth more now than they’ve ever been.
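To see what "sections that can stand on their own" looks like from the machine's side, here is a small sketch that splits a page into heading-delimited passages and flags skipped heading levels. It uses only Python's standard-library HTML parser; the names `SectionSplitter`, `split_sections`, and `skipped_levels` are hypothetical, and this is not a description of how any AI system actually segments pages.

```python
from html.parser import HTMLParser

HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class SectionSplitter(HTMLParser):
    """Groups page text under the heading that precedes it."""

    def __init__(self):
        super().__init__()
        self.sections = []  # each: {"level": int, "heading": str, "text": str}
        self._in_heading = False

    def handle_starttag(self, tag, attrs):
        if tag in HEADINGS:
            self.sections.append({"level": int(tag[1]), "heading": "", "text": ""})
            self._in_heading = True

    def handle_endtag(self, tag):
        if tag in HEADINGS:
            self._in_heading = False

    def handle_data(self, data):
        if not self.sections:
            return  # text before the first heading is ignored in this sketch
        key = "heading" if self._in_heading else "text"
        self.sections[-1][key] += data + " "


def split_sections(html):
    parser = SectionSplitter()
    parser.feed(html)
    parser.close()
    for section in parser.sections:
        # Collapse runs of whitespace left over from the markup.
        section["heading"] = " ".join(section["heading"].split())
        section["text"] = " ".join(section["text"].split())
    return parser.sections


def skipped_levels(sections):
    """Return headings that jump more than one level below the previous heading."""
    skipped, previous = [], 0
    for section in sections:
        if previous and section["level"] > previous + 1:
            skipped.append(section["heading"])
        previous = section["level"]
    return skipped
```

Reading each extracted `text` value in isolation is a quick test of whether the section answers its own heading without leaning on the paragraphs around it.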

Demonstrated authority

Being cited by AI systems has become a meaningful competitive advantage. BrightEdge found that sites earning citations inside AI Overviews see CTR increases of up to 35% compared to traditional organic rankings alone. Websites with author schema are 3x more likely to appear in AI answers, and sites implementing structured data and FAQ blocks saw a 44% increase in AI search citations.

In practice, demonstrated authority means author credentials on every piece, original data and research when you have it, linked sources for every claim, and topical depth across related content: not one-off articles, but interconnected clusters that demonstrate sustained expertise.
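Author credentials are typically expressed to machines as schema.org structured data. The sketch below builds JSON-LD for an article with a named author and for an FAQ block, using the schema.org `Article`, `Person`, `Organization`, and `FAQPage` types. The function names and every sample value are hypothetical, and which properties a given AI system actually reads is not something this example can confirm.

```python
import json


def article_jsonld(headline, author_name, author_title, publisher, date_published):
    """Return JSON-LD for an Article with an attributed, credentialed author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Person", "name": author_name, "jobTitle": author_title},
        "publisher": {"@type": "Organization", "name": publisher},
    }
    return json.dumps(data, indent=2)


def faq_jsonld(pairs):
    """Return JSON-LD for an FAQPage from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)
```

The output is meant to sit in a `script` element of type `application/ld+json` in the page head, generated from the same author and FAQ fields your CMS already stores.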

Authority isn’t just a ranking signal – it’s the entry qualification for AI inclusion.

Extractable specificity

This is the one that separates citation-ready content from content that’s merely well-written. AI systems select content that provides extractable facts – numbers, definitions, named frameworks, concrete comparisons. Content that gestures at a topic (“there are many factors to consider”) gets skipped in favor of content that states something specific and citable.

The Princeton study found that adding statistics to content improved AI visibility by 41%, and citing credible sources improved visibility by 115% for lower-ranked pages. That 115% figure is significant: it means content that isn’t winning the traditional ranking game can still earn AI citations by being specific and well-sourced.

How Does This Apply Differently in Regulated Industries?

For regulated industries, the stakes are higher and the timeline compressed – but the structural fit is actually better.

Conductor’s Q1 2026 analysis of 21.9 million searches found that healthcare queries trigger AI Overviews at a rate of 48.75% – nearly double the overall average. For healthcare organizations and universities, AI is already mediating close to half the informational queries that drive patient acquisition and enrollment.

The structural advantage is real. Healthcare systems, universities, and government agencies produce content that’s inherently tied to their institutional expertise. A hospital publishing evidence-based patient education content is structurally closer to citation-ready than a SaaS company publishing tangentially related blog posts for keyword volume. The authority is real. The specificity is built in by the nature of the content. What’s typically missing is the formatting and schema work that makes it extractable.

When we optimize content for GEO, the biggest wins often come from restructuring content that already exists – not creating new content from scratch.

What Should You Do First to Make Your Content Citation-Ready?

Start with what you have. The gap is almost always structural, not substantive.

- Audit your top 20 pages for extractability. Read the first paragraph of each section in isolation. Does it directly answer a question someone would ask an AI tool? If it doesn’t, restructure it. AI systems pull from the opening sentences of well-structured sections. Bury your answer three paragraphs in and it won’t get cited.

- Implement the schema that AI systems actually use. FAQPage, Organization, Article, and author schema across your priority content. Author schema is especially high-impact – BrightEdge’s research shows it triples your likelihood of appearing in AI answers.

- Track AI visibility alongside traditional rankings. Oomph’s GEO Analytics and Reporting service configures tracking in GA4 and Google Search Console to monitor AI bot traffic and AI-generated search impressions. At minimum, watch for the pattern of rising impressions with declining clicks – that’s the clearest signal that AI is summarizing your content without sending visitors.

- Build for reuse from the start. Every new piece of content should include at least one standalone definition, one specific data point, and one direct answer to a question your audience would ask an AI tool. Make it easy for AI systems to cite you. That’s the architecture.
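The rising-impressions, declining-clicks pattern mentioned above is easy to check once you have monthly numbers in hand. This sketch assumes you have exported monthly impressions and clicks yourself (it does not call GA4 or Search Console), and the function name and the three-month window are illustrative assumptions rather than a recommended threshold.

```python
def rising_impressions_falling_clicks(months, changes=3):
    """months: list of (label, impressions, clicks), oldest first.

    True if, across the last `changes` month-over-month steps,
    impressions rose every time and clicks fell every time.
    """
    if len(months) < changes + 1:
        return False  # not enough history to judge
    recent = months[-(changes + 1):]
    steps = zip(recent, recent[1:])
    return all(
        later[1] > earlier[1] and later[2] < earlier[2]
        for earlier, later in steps
    )
```

Run it per page or per section of the site rather than sitewide, so one seasonal landing page does not mask the pages where AI summaries are absorbing the clicks.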

In 20 years of building digital experiences, I’ve watched a handful of shifts fundamentally change how content needs to be structured. Mobile was one. Accessibility-first was another. The shift to AI-mediated discovery is the next.

Citation-Ready Content Architecture isn’t a bolt-on to your existing strategy – it’s the design principle that makes your existing strategy work across today’s fragmented discovery environment. Organizations that build for it now will compound that advantage as AI-mediated search grows. Those that wait will be optimizing for a world that has already moved on.

We’re helping clients across healthcare, higher education, and government make this shift. If your analytics show that pattern – impressions climbing, clicks dropping – start here.

As direct website traffic decreases and LLMs slurp up text from multiple sources to mix together and redistribute to users, it has never been more important to maintain high-quality online content. A ROT analysis — which stands for Redundant, Obsolete, Trivial — is a framework through which we can evaluate site content to improve it for usability, SEO, retrieval, and GEO.

This is a flexible exercise that can apply to a variety of digital properties: web pages, PDFs, intranets, social media pages, call center databases, support knowledge bases, and more. Anywhere that you, as an organization, are speaking to your audience, you have an opportunity to share knowledge, build trust, and solidify your brand image.

Similarly, ROTten content can mislead users, seed doubt, and damage your reputation.

When you use a ROT analysis to kickstart a content clean-up project, you’re ensuring that users and bots alike find only your latest, clearest, most accurate and relevant information. When done properly, it can even set up your team for better content production and management in the future.

How Oomph Approaches Content ROT Analyses

Every ROT analysis looks a little different depending on the industry, content, and what a particular audience needs.

Make a Plan

Before jumping into dashboards and spreadsheets, we start with a conversation. With any project, we need to understand what problems your organization needs to solve: What’s important to you and your users? Where are you struggling? This is our chance to understand the why behind your content.

As we learn more about what you need, we’ll define what ROT is for your organization. What existing policies do you have in place around archiving old or outdated content? If you don’t have policies, what makes sense for you? What key user journeys should the analysis focus on? We’ll answer these questions and more to make sure we’re going into the analysis with a clear vision of what your content should look like so we can see where it’s missing the mark.

Find the ROT

Let’s get into what ROT looks like specifically and where we look for it.

Redundant means the content communicates information in more than one place. This can result in an inefficient information architecture and messy user paths. There are times duplicate content can be helpful, like when separate task flows require some of the same information. That’s why it’s important to know upfront what journeys are most important to prioritize. In these cases, when the same content shows up in multiple places across a website or app, it’s important to have a method for keeping all content in sync. If it’s possible to edit this content in a single place while distributing it across multiple pages, that can be a great method for maintaining a single source of truth.

Redundant might also refer to several articles written over time that deal with the same topics in similar ways. This can result in the newest content on the topic having its SEO/GEO cannibalized by older content on the same topic. Users might more easily find older content when you want them to find the latest.

Obsolete content includes outdated information, language, and (probably broken) links. This type of ROT is especially damaging when it’s related to products, services, or something users are trying to take action on. It’s important to keep in mind your entire digital landscape. Maybe you’ve updated the content on your main service page, but did you remember to update automated emails, support articles, and meta descriptions? What pages aren’t built directly into a user flow but can still be found by Google?

Consider whether it makes sense to archive or unpublish old content, like past news and events. And consider your audience: Is there a reason users would be looking for a historical record, and is that need strong enough to justify keeping it available? If you do choose to keep outdated information published, make sure that it’s clear to users that the content is old and consider providing a link to the latest version.

Trivial content can be harder to define and is highly subjective based on the organization. This might look like “fluff” pieces shared for the sake of SEO or maintaining a publishing schedule, or excessive marketing language that ultimately doesn’t serve you or your users. It might be low-traffic fine print details that apply to a specific audience who typically finds it another way. Maybe it’s content that is related to but outside of your core business function. You’ll need to make some decisions about what is important to you.

To find ROT, we’ll use a variety of collection and measurement tools. SortSite, Screaming Frog, and Siteimprove can locate broken links, orphaned pages, and other SEO issues. Google Analytics, Hotjar, Contentsquare, and Microsoft Clarity can show common user flows and help identify trivial content. Data from these tools can also prioritize the analysis by surfacing what content is most important to users. If a page gets a lot of traffic, we know that it needs to be clear, up-to-date, and accurate. If a page isn’t visited much, we need to ask whether it should be more highly trafficked, consolidated with higher performing content, or removed.
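As a rough illustration of how a first pass might be automated once crawl and analytics data are exported, the sketch below flags candidate pages for human review. The function name, the input fields, and all three thresholds are assumptions for the example; it uses Python's standard `difflib` for text similarity, which is one simple option and not what the tools named above do internally.

```python
from datetime import date
from difflib import SequenceMatcher


def flag_rot(pages, today, stale_after_days=730, min_views=10, similarity=0.9):
    """pages: list of dicts with "url", "text", "last_updated" (date), "views".

    Returns {url: set of flags}. Flags are prompts for review, not verdicts.
    """
    flags = {page["url"]: set() for page in pages}

    # Redundant: compare every pair of pages (fine for small sets, slow for large ones).
    for i, first in enumerate(pages):
        for second in pages[i + 1:]:
            ratio = SequenceMatcher(None, first["text"], second["text"]).ratio()
            if ratio >= similarity:
                flags[first["url"]].add("redundant")
                flags[second["url"]].add("redundant")

    for page in pages:
        # Obsolete: untouched for longer than the agreed archive window.
        if (today - page["last_updated"]).days > stale_after_days:
            flags[page["url"]].add("obsolete")
        # Low traffic may mean trivial, or may mean hard to find; a person decides.
        if page["views"] < min_views:
            flags[page["url"]].add("low-traffic")
    return flags
```

The thresholds are exactly the kind of thing the planning conversation should settle first, since what counts as stale or trivial differs from one organization to the next.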

Deliverables and Next Steps

After all this sorting and evaluating, you might be wondering what you’ll tangibly get out of the process. We know content teams are busy, and going through a review can feel like adding more work to the pile. How can we help prioritize meaningful progress here?

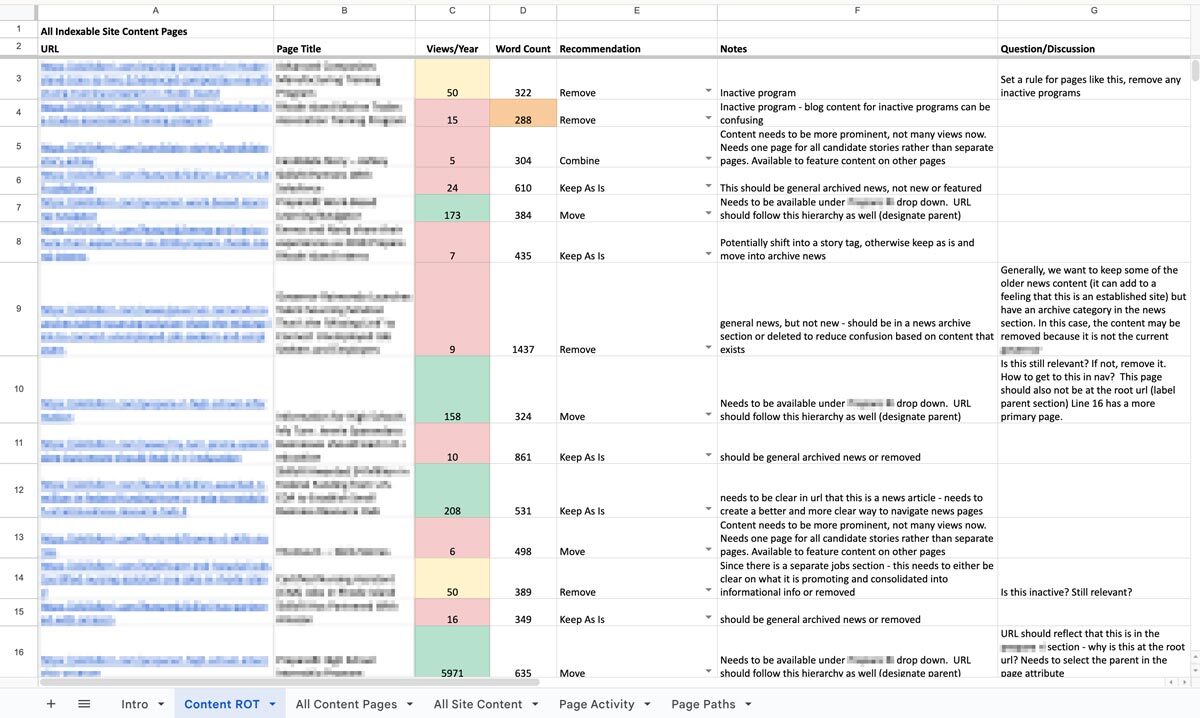

The big outcome is one of my personal favorites: a clean, annotated, actionable spreadsheet. Specifically, we’ll put together an audit of your content with links, page titles, notes on whether the content falls into any of the three ROT categories, and what to do about it: keep, modify, combine, or delete. Depending on the tools your content team uses or what you are willing to subscribe to, we might prepare dashboards and reports directly within an app that your team can use as an ongoing progress tracker. Wherever this list of to-dos lives, we’ll help you prioritize it so you can start ticking off the most crucial items. Depending on what we decided in early scoping agreements, we can even help work through some high-impact issues, like bulk deleting content, suggesting rewrites, and fixing broken links.
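For teams that want to start their own version of that spreadsheet, a minimal sketch of the structure follows. The column names are our reading of the description above and are an assumption, as is the `audit_to_csv` function; the four allowed actions come straight from the keep, modify, combine, or delete list.

```python
import csv
import io

ACTIONS = {"keep", "modify", "combine", "delete"}
COLUMNS = ["url", "title", "redundant", "obsolete", "trivial", "action", "notes"]


def audit_to_csv(rows):
    """Serialize audit rows (dicts keyed by COLUMNS) to CSV text.

    Raises ValueError if a row's action is not one of the four allowed values.
    """
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        if row["action"] not in ACTIONS:
            raise ValueError(f"unknown action for {row['url']}: {row['action']}")
        writer.writerow(row)
    return buffer.getvalue()
```

Restricting the action column to four values keeps the sheet sortable and makes it obvious which rows still need a decision.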

We can also set up an ongoing content hygiene plan. While a dedicated content ROT analysis is a great way to identify and work through issues, an effective content plan should prevent ROT as much as possible and reduce the need for a large effort in the future. This might involve setting up policies, practices, and tools to guide future content management. We’ll help you find ways to see the bigger picture when updating or developing new content to make sure all pieces are accounted for. And when ROT falls through the cracks, you’ll have a plan to regularly review site content, setting ahead of time the when, what, and who.

One Piece in the Puzzle of Strong Content

As we continue to inspect the quality of your website and other digital properties, we can use this ROT analysis as a jumping-off point. The initial audit may lead directly into a deeper content audit to evaluate URL paths, heading usage, performance metrics, reading level, and more. As we consider reworking, combining, and cutting entire pages, we may find the need to restructure your information architecture and taxonomy structures, in part or in whole, informed by research exercises like card sorts and tree tests. Depending on what we’ve found in the existing content and how it needs to change, we might suggest changes to your content model, adding, modifying, or removing content types and the relationships between them.

A content ROT analysis is a flexible and fruitful way to take a fresh look at your content ecosystem. If you need help getting started, let us know. We’d love to dig in with you!

Contentful is no longer just an alternative CMS. It’s become a foundational platform for organizations navigating complexity, regulation, and rapid digital change. In 2026, the question isn’t “What is Contentful?” It’s “Why are so many organizations rebuilding their digital ecosystems around it?” The answer lies in how digital experiences are built, managed, and scaled today.

Contentful Is Built for Systems, Not Pages

Traditional CMS platforms were designed around pages and templates. That model breaks down when content needs to move faster, live in more places, and remain consistent across teams and channels.

Contentful takes a different approach. It treats content as structured data, not static pages. That means teams create content once and deliver it anywhere—websites, apps, portals, email, or future channels that don’t yet exist.

In 2026, this isn’t a “nice to have.” It’s how modern digital platforms operate.

Composable Architecture Is Now the Default

Composable architecture has moved from trend to standard. Organizations want the freedom to choose best-in-class tools without being locked into monolithic platforms.

Contentful fits cleanly into this model. It integrates with design systems, analytics platforms, personalization tools, consent managers, and AI services through APIs—without forcing teams into rigid workflows.

This flexibility allows organizations to evolve their stack over time instead of rebuilding every few years.

AI Depends on Structured Content

AI-driven experiences are only as good as the content behind them. In 2026, organizations are using AI to support personalization, search, localization, content optimization, and automation.

Contentful’s structured content model makes this possible. Clean, well-defined content enables AI tools to understand, reuse, and adapt content accurately—without introducing risk or inconsistency.

For teams exploring AI responsibly, Contentful provides the infrastructure needed to scale with confidence.

Governance and Compliance Are Built In, Not Bolted On

For regulated and mission-driven organizations, governance isn’t optional. Publishing controls, audit trails, permissions, and review workflows are essential.

Contentful supports these needs at scale. Teams can define roles, control who edits or publishes content, and maintain visibility into changes across environments. This level of governance is critical in industries like healthcare, legal, finance, and the public sector.

In 2026, compliance isn’t something teams add later—it’s designed into the platform from day one.

Marketing and Development Work Better Together

One of Contentful’s biggest advantages is how it aligns marketing and engineering teams. Developers maintain design systems and integrations. Content teams manage content without breaking layouts or workflows.

This separation of concerns reduces friction, speeds up delivery, and minimizes production errors—especially as digital ecosystems grow more complex.

Ready to explore what Contentful could do for your organization? Whether you’re evaluating platforms, planning a migration, or looking to optimize your current setup, Oomph can help you build a content infrastructure designed for the long term. Let’s talk about your next move.

Why Organizations Move to Contentful Now

Organizations typically migrate to Contentful when legacy systems start holding them back. Common triggers include:

- Slow publishing workflows

- Heavy developer dependency

- Difficulty scaling across channels

- Growing compliance requirements

- The need to support AI and personalization

In 2026, Contentful isn’t chosen because it’s new. It’s chosen because it’s resilient.

For organizations new to the platform, getting started doesn’t have to mean a complete rebuild. Oomph’s Contentful Kickstart Package helps teams move from decision to deployment with a structured, low-risk approach—giving you the foundation to scale as your needs evolve.

The Takeaway

Contentful has evolved alongside the modern digital landscape. It’s not just a CMS—it’s a content platform designed for scale, governance, and change.

For organizations planning beyond their next website launch and toward long-term digital maturity, Contentful provides the flexibility and confidence needed to move forward.

For many organizations, privacy regulations like GDPR and CCPA seem like distant legal concerns rather than operational priorities. In practice, however, websites serve as the primary point of data collection—making compliance far more relevant than most teams assume. If your site collects user data in any form, privacy compliance isn’t optional.

Understanding When GDPR and CCPA Apply

GDPR governs the collection of personal data from users in the European Union, while CCPA applies to personal data collected from California residents.

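Crucially, these regulations are triggered primarily by where your users are, not where your company is headquartered, though CCPA also applies only to businesses that meet certain size or data-handling thresholds. A U.S.-based organization serving a global audience may be subject to both frameworks.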

Why Websites Are at the Center of Compliance

Most modern websites collect data through multiple channels:

- Contact and intake forms

- Newsletter subscriptions

- Analytics and tracking tools

- Cookies and personalization technologies

- Third-party embeds and integrations

Each of these collection points creates compliance obligations around consent, transparency, and user control.

Moving Beyond Cookie Banners

Meaningful compliance extends well beyond footer disclaimers. Effective privacy management requires:

- Clear consent and opt-out mechanisms

- Transparent communication about data usage

- The ability to update policies efficiently

- Controlled publishing workflows

- Comprehensive auditability for content and data modifications

Legacy CMS platforms frequently lack the flexibility and governance capabilities needed to meet these requirements.
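To show what consent, opt-out, and auditability can look like in practice, here is a minimal sketch of an append-only consent log. The `ConsentLog` class and its method names are hypothetical, and the default of "no recorded decision means no consent" is an assumption that follows the stricter opt-in reading; an opt-out model would flip that default.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentLog:
    """Append-only record of consent choices, so every change stays auditable."""
    events: list = field(default_factory=list)

    def record(self, user_id, purpose, granted):
        """Store one opt-in or opt-out decision with a UTC timestamp."""
        self.events.append({
            "user_id": user_id,
            "purpose": purpose,
            "granted": granted,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def is_allowed(self, user_id, purpose):
        """Latest decision wins; no recorded decision means no consent (assumption)."""
        for event in reversed(self.events):
            if event["user_id"] == user_id and event["purpose"] == purpose:
                return event["granted"]
        return False


log = ConsentLog()
log.record("user-123", "analytics", granted=True)    # user opts in
log.record("user-123", "analytics", granted=False)   # user later opts out
print(log.is_allowed("user-123", "analytics"))
print(len(log.events))
```

Nothing is ever overwritten: the opt-out is a new event rather than an edit to the old one, which is what makes the history reviewable later.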

The Role of Your CMS in Privacy Compliance

Your content management system is instrumental in supporting privacy obligations. A modern, composable CMS enables organizations to:

- Decouple content from data logic

- Integrate consent and privacy tools seamlessly

- Manage access and publishing permissions effectively

- Deploy compliance updates across all channels instantly

- Minimize risk by limiting unnecessary data exposure

For regulated and mission-driven organizations, CMS limitations can translate directly into compliance vulnerabilities.

The Cost of Non-Compliance

While regulatory penalties are a concern, the greater risk lies in eroding user trust.

Today’s users expect transparency and control over their personal information. Organizations unable to deliver on these expectations risk damaging their reputation with customers, donors, and partners.

Final Thoughts

GDPR and CCPA represent more than legal obligations—they present fundamental digital experience challenges. Websites built on flexible, compliance-ready platforms are better positioned to adapt as privacy expectations continue to evolve.

In today’s environment, privacy compliance shouldn’t be viewed as a constraint. It’s an essential component of delivering a modern, trustworthy digital experience.

Need help ensuring your website meets modern privacy standards? Our team specializes in building compliance-ready digital platforms that protect your users and your organization. Let’s discuss your requirements.

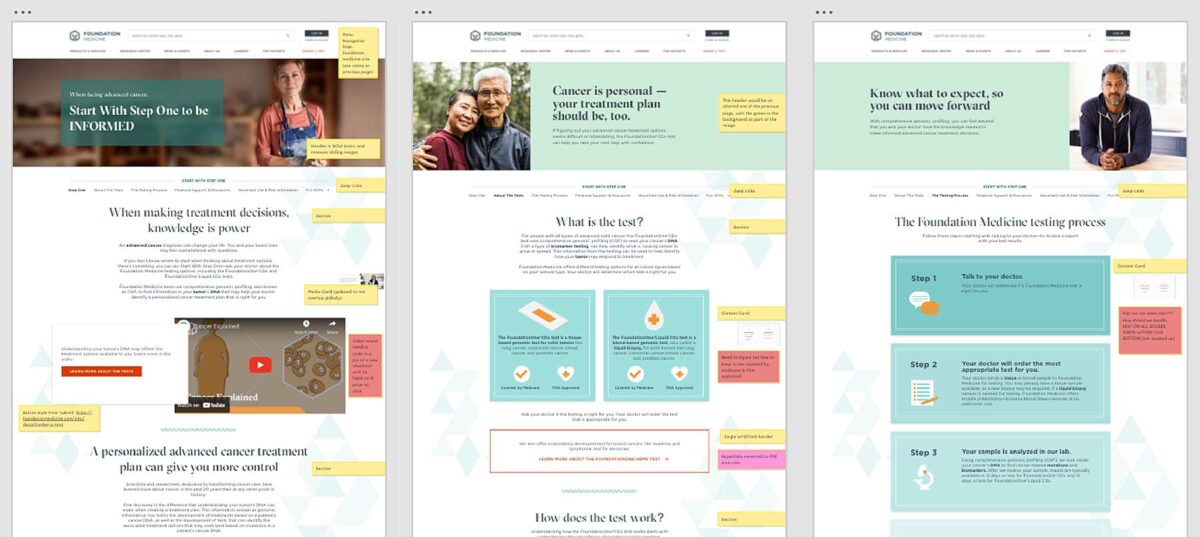

The Brief

Less Can Be Much More

The previous Foundation Medicine (FMI) team built their marketing platform on a decoupled content management architecture. Oomph has used decoupled and micro-service architecture for projects such as Leica Geosystems and Wingspans.

But decoupled is not right for every organization, and a decoupled approach can be architected in many different ways. FMI had found their implementation created more headaches than high-fives:

- The flat hierarchy of Contentful created 158 content types, most of which were not useful for creating content.* Therefore, authors had to sift through long lists to find the actual content they needed to edit or create.

- Not everything in their front-end templates was accessible through the CMS. (That would have created even more content types!) So the team was beholden to an engineer to make text edits within some areas.

- Previewing new pages before publishing was not added to their implementation. Authors struggled to predict how content in the admin would display as the published page, and spent much time toggling back and forth.

In short, publishing new content or making content edits was too slow. Responding quickly to changing market conditions or new announcements in the cancer treatment space was not possible, eroding reliance and trust in what should be a cutting-edge brand.

* It should be noted that Contentful uses a “Content Type” for almost everything, from content to taxonomy to design components.

The Approach

Moving away from Decoupled

Based on their current pain points, Oomph verified that switching to a traditional “monolithic” architecture would solve their problems and provide additional benefits:

- Reduce cognitive overload and maintenance overhead by drastically reducing content types

- Empower authors to update all content anywhere

- Accelerate content publishing with an accurate visual preview

Oomph completed an extensive audit and reduced content types from 158 down to 30. We created a tight, flexible system in Drupal of just 14 content types — news item, event page, product page, etc. — and 16 design components — text blocks, accordions, etc.

How did we achieve such a reduction? Our consolidation approach moved from many narrowly specific types (one type for a small number of very specific pieces of content) to fewer general types with flexibility built in (one type that supports many pieces of content through specific options).
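The consolidation idea can be sketched in a few lines. The type names below are invented for illustration and are not Foundation Medicine’s actual content model; the sketch only shows how one general component with options can stand in for several specific ones.

```python
from dataclasses import dataclass

# Before: one narrowly scoped type per variation (hypothetical names).
SPECIFIC_TYPES = [
    "text_block_left_aligned",
    "text_block_centered",
    "text_block_with_button",
    "text_block_centered_with_button",
]


@dataclass
class TextBlock:
    """After: one general component whose options cover every variation above."""
    body: str
    alignment: str = "left"    # "left" or "center"
    button_label: str = ""     # empty string means no button

    def legacy_type(self):
        """Name the old specific type this configuration would have required."""
        name = "text_block_centered" if self.alignment == "center" else "text_block"
        if self.button_label:
            name += "_with_button"
        elif self.alignment == "left":
            name += "_left_aligned"
        return name


# Every combination of options maps back to one of the old specific types.
covered = {
    TextBlock("Example copy", alignment, label).legacy_type()
    for alignment in ("left", "center")
    for label in ("", "Learn more")
}
print(covered == set(SPECIFIC_TYPES))
```

Two options replace four types here. Add a third option and the same single component would cover eight variations, which is the kind of arithmetic that makes a long list of content types shrink quickly.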

Retaining Key Functionality

Foundation Medicine exists to help people with cancer and those who treat them. To accomplish this, the website features intricate tools for providers to navigate essential cancer resources and patients to find a specialist. None of these tools were compromised by switching to Drupal. In fact, with efficiency gains and more timely content governance, these resources became more valuable.

The Results

Connecting Providers with Genomic Data and Patients with Personalized Care

The upgrade to Foundation Medicine’s digital platform has been invisible by design. The brand and the visuals were performing well for their business and were comfortable for their audience. The outward appearance didn’t need an update, but the internal workflows that support continued trust certainly did.

The Foundation Medicine team now has the autonomy to make content updates quickly, the architecture and design components to confidently curate each page build, and the infrastructure to create clear and consistent content — a win for the team and for the many people who turn to Foundation Medicine in their time of need.

Metrics: Page Views, Scroll Depth, User Engagement

In recent months, Generative Engine Optimization (GEO) has been gaining attention, often positioned as the next evolution beyond traditional Search Engine Optimization (SEO). For some clients, this presents an exciting opportunity to rethink and restructure their digital content. For others, it can feel overwhelming, raising more questions than answers. As AI-powered search tools like ChatGPT, Perplexity, and Gemini change how people discover content online, clients increasingly ask: What is GEO, and how can we prepare our sites for it?

The following handy Q&A guide aims to demystify Generative Engine Optimization (GEO), explain why it matters, and provide practical steps your team can take to get started.

Q: What is GEO and how is it different from SEO?

A: GEO stands for Generative Engine Optimization. While SEO (Search Engine Optimization) focuses on getting your content to rank in traditional search engines like Google (via keywords, backlinks, and site performance), GEO focuses on getting your content mentioned, referenced, summarized, or cited in AI-generated answers from tools like ChatGPT, Gemini, and Perplexity.

Think of SEO as getting your content listed, whereas GEO is about making your brand and its content the answer.

Q: Why should my organization care about GEO?

A: AI platforms are rapidly becoming the first stop for users looking for answers, especially younger audiences and professionals. If an answer appears via Gemini at the top of a Google search, fewer people may scroll further down the page to look for other sources. They got the answer they needed from just one search. If your content isn’t optimized for these tools, you’re missing out on certain traffic data, visibility, and an opportunity to build trust.

In 2026, ChatGPT alone sees over 4.5 billion visits per month, and Perplexity handles nearly 500 million monthly queries.

Q: How is GEO impacting my site’s analytics?

A: Likely a lot. Generative engines often summarize content without requiring a click. That means you may see fewer impressions and clicks, even if your content is powering the AI’s answer. Most websites are seeing direct traffic decline across the board. With that said, users who do click through to sites are often engaging more deeply, leading to longer session durations and higher conversion rates.

Because of this, it’s crucial to learn these new patterns and recognize them within your site’s analytics by setting up new reports.
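One possible starting point for such a report is to label each session by its referrer. The sketch below is an assumption-heavy illustration: the hostname list is our guess at common AI referrers and should be checked against what actually shows up in your own analytics, and the function names are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames for AI tools; verify against your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url):
    """Label a session's referrer as an AI tool, another site, or direct."""
    if not referrer_url:
        return "Direct"
    host = (urlparse(referrer_url).hostname or "").lower()
    return AI_REFERRERS.get(host, "Other referral")


def summarize(referrer_urls):
    """Count sessions per source label."""
    return Counter(classify_referrer(url) for url in referrer_urls)


# Illustrative sessions only.
sessions = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=example",
    "",
    "https://www.google.com/",
]
print(summarize(sessions))
```

Keep in mind that many AI-assisted visits arrive with no referrer at all, so a report like this likely undercounts. It is a way to start seeing the pattern, not a full measurement.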

Q: How do AI engines choose which content to cite?

A: AI tools evaluate a number of factors, with the most important being:

- Authority: Are you a trusted source? Do you have backlinks, credentials, or media citations?

- Structure: Do you use schema markup, headings, and clear Q&A formatting?

- Freshness: Is your content updated regularly?

- Relevance: Does your content align with how users ask questions in natural language?

Each tool has its own algorithm, but clear, factual, structured content with recent updates from trusted sources performs best.
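For the Structure factor, one common form of schema markup for Q&A content is schema.org’s FAQPage type, expressed as JSON-LD. The sketch below builds that markup from question-and-answer pairs. The helper names are hypothetical, and no markup guarantees a citation; it simply makes the Q&A structure explicit to machines.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap the markup in the script tag that a page template would include."""
    # Escape "<" so answer text can never close the script element early.
    body = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{body}\n</script>'


markup = faq_jsonld([
    ("What is GEO?", "GEO stands for Generative Engine Optimization."),
])
print(as_script_tag(markup))
```

The questions and answers in the markup should match the visible text on the page, so the structured version and the human-readable version tell the same story.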

Q: What kind of content works best for GEO?

A: Content that answers questions directly, especially with a conversational tone, tends to work well. Additionally, you want your content to explain not just the what, but also the why and how, since generative engines often expand on user intent. Content structures that perform well for GEO include:

- Q&A sections

- “Top” or “Best” lists (Examples: Top Restaurants in Providence, Rhode Island or Best Fall Events in California)

- Evergreen guides that are updated annually